You were just scraping away, getting some data, testing some things, maybe not even using Google Search, and then this error pops up:

We're sorry, but your computer or network may be sending automated queries. To protect our users we can't process your request right now.

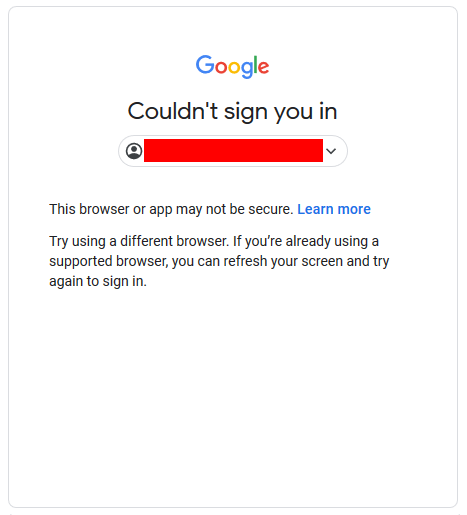

Or this error:

Sometimes you can fix it by:

- Disabling two-factor authentication for this Google account.

- Allowing less secure app access.

- Reinstalling or updating Google Chrome.

- Enabling JavaScript (if you have disabled it or are using a browser that doesn't support it).

- Logging in to Stack Overflow (or a similar site) with your Google account, then opening your preferred Google service while still logged in.

It is harder to fix when Google has identified devices on your network that appear to be sending automated traffic to it.

Google's support article "Unusual traffic from your computer network" lists the following situations as automated traffic:

- Sending searches from a WebDriver-driven browser, robot, computer program, automated service, or search scraper

- Using software that sends searches to Google to see how a website or webpage ranks on Google

In that case, the error page will most likely show a reCAPTCHA that you have to solve before you can continue.

Solution

If you have already reached that stage, the only way to proceed is to solve the CAPTCHA: by hand, through a CAPTCHA farm, or with an AI-based CAPTCHA solver. If you want to find out how to do this, you should read this.

Prevention

However, there are several ways to avoid being detected during web-scraping in the first place:

1. Removing the navigator.webdriver flag

2. Obfuscating the JavaScript in the browser driver executable

3. Changing the resolution, user agent, and other details

4. Using a realistic page flow and avoiding traps

5. Changing your IP address using proxies

6. Using random delays

7. Not using a headless browser

8. Keeping cookies

9. Backing off
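Items 6 and 9 are the easiest to illustrate in code. The sketch below uses only Python's standard library and is an assumption about how you might structure it, not a method taken from the article. `random_delay` and `fetch_with_backoff` are hypothetical helpers: `fetch` is whatever function loads a page in your scraper, and `is_blocked` is a check you supply yourself (for example, looking for the "automated queries" message in the response). The backoff uses exponential growth with "full jitter", so waits get longer after each block but never fall into a fixed rhythm.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def random_delay(min_s: float = 2.0, max_s: float = 6.0,
                 sleep: Callable[[float], None] = time.sleep) -> float:
    """Pause for a random interval between min_s and max_s seconds (item 6)."""
    delay = random.uniform(min_s, max_s)
    sleep(delay)
    return delay


def fetch_with_backoff(fetch: Callable[[], T],
                       is_blocked: Callable[[T], bool],
                       retries: int = 5,
                       base: float = 1.0,
                       max_delay: float = 300.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fetch() until is_blocked() is False, backing off exponentially (item 9).

    After the n-th blocked attempt (n = 0, 1, ...) the wait is drawn uniformly
    from [0, min(max_delay, base * 2**n)] seconds ("full jitter").
    """
    for attempt in range(retries):
        result = fetch()
        if not is_blocked(result):
            return result
        if attempt == retries - 1:
            break  # no point sleeping after the last attempt
        cap = min(max_delay, base * 2 ** attempt)
        sleep(random.uniform(0, cap))
    raise RuntimeError(f"still blocked after {retries} attempts")
```

In a scraping loop you would call `random_delay()` between page loads and wrap each request in `fetch_with_backoff`. The `sleep` parameter exists only so the waits can be observed or skipped when testing.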

You can find out how to apply each of these nine techniques in this article about ways to hide your bot automation from detection.
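Items 3, 5 and 8 can be sketched for plain HTTP requests (not a WebDriver-driven browser) with Python's standard `urllib.request` and `http.cookiejar` modules. This is a hypothetical illustration: `build_session`, the User-Agent string and the proxy address are placeholders, and the code only shows where cookies, headers and a proxy are configured. It does not by itself get past Google's detection.

```python
import http.cookiejar
import urllib.request
from typing import Optional, Tuple


def build_session(user_agent: str,
                  proxy_url: Optional[str] = None
                  ) -> Tuple[urllib.request.OpenerDirector, http.cookiejar.CookieJar]:
    """Build an opener that keeps cookies between requests (item 8), sends a
    fixed User-Agent (item 3) and optionally routes traffic through a proxy (item 5)."""
    jar = http.cookiejar.CookieJar()
    handlers = [urllib.request.HTTPCookieProcessor(jar)]
    if proxy_url is not None:
        # The same proxy is used for both plain and TLS traffic.
        handlers.append(urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url}))
    opener = urllib.request.build_opener(*handlers)
    # Replace urllib's default "Python-urllib/x.y" User-Agent.
    opener.addheaders = [("User-Agent", user_agent)]
    return opener, jar
```

Call `opener.open(url)` for every request in one session so that cookies set by earlier responses are sent back; for a new session, build a new opener with a different User-Agent and proxy. To keep cookies across program runs, `http.cookiejar.MozillaCookieJar` can be saved to and loaded from a file instead of the in-memory `CookieJar`.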